Need to let loose a primal scream without collecting footnotes first? Have a sneer percolating in your system but not enough time/energy to make a whole post about it? Go forth and be mid: Welcome to the Stubsack, your first port of call for learning fresh Awful you’ll near-instantly regret.

Any awful.systems sub may be subsneered in this subthread, techtakes or no.

If your sneer seems higher quality than you thought, feel free to cut’n’paste it into its own post — there’s no quota for posting and the bar really isn’t that high.

The post-Xitter web has spawned soo many “esoteric” right wing freaks, but there’s no appropriate sneer-space for them. I’m talking redscare-ish, reality-challenged “culture critics” who write about everything but understand nothing. I’m talking about reply-guys who make the same 6 tweets about the same 3 subjects. They’re inescapable at this point, yet I don’t see them mocked (as much as they should be).

Like, there was one dude a while back who insisted that women couldn’t be surgeons because they didn’t believe in the moon or in stars? I think each and every one of these guys is uniquely fucked up and if I can’t escape them, I would love to sneer at them.

(Semi-obligatory thanks to @dgerard for starting this)

True believers at Vox’ Future Perfect “vertical” let out a heartfelt REEEEEE as Saltman makes the obvious move to secure all the profits

https://www.vox.com/future-perfect/374275/openai-just-sold-you-out

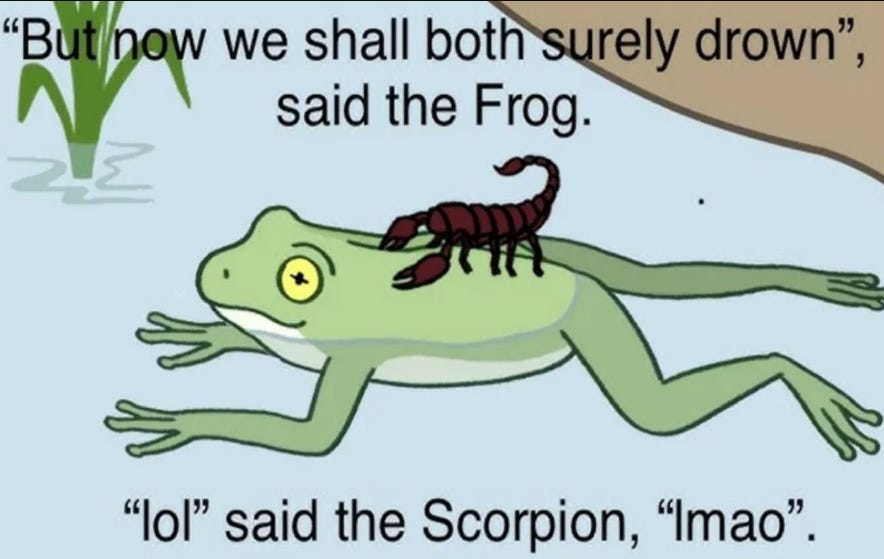

shocked that scorpions in a scorpion’s nest funded by their scorpion mates might have fallen into stinging

HN seems to be particularly deranged today, doesn’t it?

It mostly seems to be a mopey debate over whether Saltman’s impending apotheosis is good or bad.

I think they’ve been pretty sane AI-wise, lately.

you sure about that?

The most depressing thing for me is the feeling that I simply cannot trust anything that has been written in the past 2 years or so and up until the day that I die. It’s not so much that I think people have used AI, but that I know they have with a high degree of certainty, and this certainty is converging to 100%, simply because there is no way it will not. If you write regularly and you’re not using AI, you simply cannot keep up with the competition. You’re out. And the growing consensus is “why shouldn’t you?”, there is no escape from that.

This is someone who literally can’t tell good writing from bad, so he assumes everyone is using AI

He’s so close to being depressed enough to maybe ask a vital and important question about meaning and his own relationships with technology. But probably he’ll just buy more AI.

Watching AI guys slowly come around feels similar to how people have to care for their alcoholic relatives. Folks have to come to the point where they recognize where the unacceptable bullshit lies on their own, then you can show them the cold hard facts and a path back to the real world, but getting there is absolutely exhausting and often heartbreaking.

Inventor sez “I locked myself in my apartment for 4 years to build this humanoid”. Surprisingly, not a sexbot!

not a sexbot!

Skill issue.

You know that’s for Gen 2.0

This tech curve is about to go exponential, if you know what I’m sayin’

–venture capitalists, probably

all hail the hockey stick may we forever outspend all competition and reap the rewards of a ravaged market we solely control

Was salivating all weekend waiting for this to drop, from Subbarao Kambhampati’s group:

Ladies and gentlemen, we have achieved block stacking abilities. It is a straight shot from here to cold fusion! … unfortunately, there is a minor caveat:

Looks like performance drops like a rock as the number of steps required increases…

correct me if I’m reading this wrong — the results are that LLMs are much, much worse than classical AI at planning block placement for SHRDLU? that seems pretty damning

Yes, the classical algo achieves perfect accuracy and is way faster. There is also a table that shows the cost of running o1 is enormous. Like comically bad. Boil a small ocean bad. We’ll just 10x the size and it will achieve 15 steps inshallah.

Imo, this is the same behavior we see on math problems. The more steps it takes, the higher the chance it just decoheres completely. I can’t see any reason why this type of thing would just “click” for the models if they are also unable to do multiplication.

I mean this just reeks of pure hopium from OAI and co that things will magykly work out. (But the newer model is clearly better™! I still don’t see any indication that one day that chart is just going to be 100s across the board.)

Large Reasoning Models

May the coiners of this jargon step on Lego until the end of days