Over half of all tech industry workers view AI as overrated

Over half of tech industry workers have seen the “great demo -> overhyped bullshit” cycle before.

You just have to leverage the agile AI blockchain cloud.

Once we’re able to synergize the increased throughput of our knowledge capacity we’re likely to exceed shareholder expectation and increase returns company wide so employee defecation won’t be throttled by our ability to process sanity.

Sounds like we need to align on triple underscoring the double-bottom line for all stakeholders. Let’s hammer a steak in the ground here and craft a narrative that drives contingency through the process space for F24 while synthesising synergy from a cloudshaping standpoint in a parallel tranche. This journey is really all about the art of the possible after all so lift and shift a fit for purpose best practice and hit the ground running on our BHAG.

<3

What is this and how can I invest

I’m calling HR

😜

Don’t forget to make it connected to every device, ever

AIoT?

Every billboard in SF is just these words shuffled

NoSQL, blockchain, crypto, metaverse, just to name a few recent examples.

AI is overhyped, but it is, so far, more useful than any of those other examples, though.

These are useful technologies if used when called for. They aren’t all in one solutions like the smart phone killing off cameras, pdas, media players… I think if people looked at them as tools which fix specific problems, we’d all be happier.

Every year sometimes.

Best assessment I’ve heard: Current AI is an aggressive autocomplete.

Nice one! I have heard it called a fuzzy JPG of the internet.

And that’s entirely correct

No. It’s not and hasn’t been for at least a year. Maybe the AI you’re dealing with is, but it’s shown understanding of concepts in ways that make no sense for how it was created. Gotta go.

Maybe if you interpret its output as such.

Too bad it’s bullshit.

If you are actually interested in the topic, here are a few good reads:

- Do Large Language Models learn world models or just surface statistics? (Jan 2023)
- Actually, Othello-GPT Has A Linear Emergent World Representation (Mar 2023)
- Eight Things to Know about Large Language Models (April 2023)
- Playing chess with large language models (Aug 2023)
- Language Models Represent Space and Time (Oct 2023)

As you can see, the past year has shed a lot of light on the topic.

One of my favorite facts is that it takes on average 17 years before discoveries in research find their way to the average practitioner in the medical field. While tech as a discipline may be quicker to update itself, it’s still not sub-12 months, and as a result a lot of people are continuing to confidently parrot things that have recently been shown in research circles to be BS.

Largely because we understand that what they’re calling “AI” isn’t AI.

AI doesn’t necessarily mean human-level intelligence, if that’s what you mean. The AI field has wrestled with this for decades. There can be “strong AI”, which is aiming for that human-level intelligence, but that’s probably a far off goal. The “weak AI” is about pushing the boundaries of what computers can do, and that stuff has been massively useful even before we talk about the more modern stuff.

Sounds like people here are expecting to see GPAI and singularity stuff, but all they see is a pitiful LLM or other even more narrow AI applications. Remember, even optical character recognition (OCR) used to be called AI until it became so common that it wasn’t exciting any more. What AI developers call AI today will be just basic automation a few decades later.

Yup. LLM RAG is just search 2.0 with a GPU.

For certain use cases it’s incredible, but those use cases shouldn’t be your first idea for a pipeline

Given that AI isn’t purported to be AGI, how do you define AI such that multimodal transformers capable of developing abstract world models as linear representations and trained on unthinkable amounts of human content mirroring a wide array of capabilities which lead to the ability to do things thought to be impossible as recently as three years ago (such as explain jokes not in the training set or solve riddles not in the training set) isn’t “artificial intelligence”?

THANK YOU! I’ve been saying this a long time, but have just kind of accepted that the definition of AI is no longer what it was.

It is overrated. At least when they look at AI as some sort of brain crutch that redeems them from learning stuff.

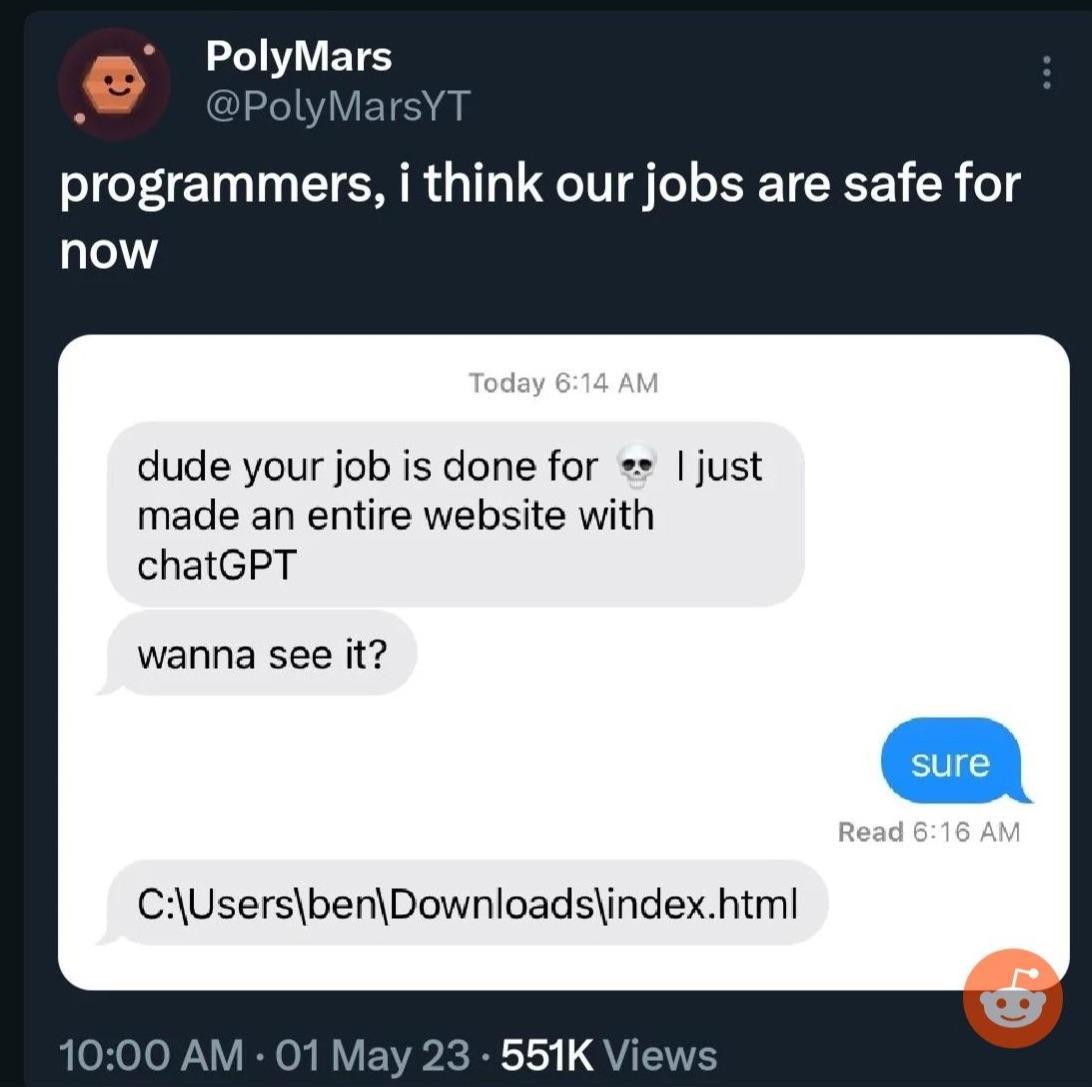

My boss now believes he can “program too” because he lets ChatGPT write scripts for him that more often than not are poor BS.

He also enters chunks of our code into ChatGPT when we run into bugs or aren’t finished with everything in 5 minutes as some kind of “Gotcha moment”, ignoring that the solutions he then provides don’t work.

Too many people see LLMs as authorities they just aren’t…

It bugs me how easily people (a) trust the accuracy of the output of ChatGPT, (b) feel like it’s somehow safe to use output in commercial applications or to place output under their own license, as if the open issues of copyright aren’t a ten-ton liability hanging over their head, and (c) feed sensitive data into ChatGPT, as if OpenAI isn’t going to log that interaction and train future models on it.

I have played around a bit, but I simply am not carefree/careless or am too uptight (pick your interpretation) to use it for anything serious.

Too many people see LLMs as authorities they just aren’t…

This is more a ‘human’ problem than an ‘AI’ problem.

In general it’s weird as heck that the industry is full force going into chatbots as a search replacement.

Like, that was a neat demo for a low hanging fruit usecase, but it’s pretty damn far from the ideal production application of it given that the tech isn’t actually memorizing facts and when it gets things right it’s a “wow, this is impressive because it really shouldn’t be doing a good job at this.”

Meanwhile nearly no one is publicly discussing their use as classifiers, which is where the current state of the tech is a slam dunk.

Overall, the past few years have opened my eyes to just how broken human thinking is, not as much the limitations of neural networks.

It is overrated. It has a few uses, but it’s not a generalized AI. It’s like calling a basic calculator a computer. Sure, it is an electronic computing device and makes a big difference in calculating speed for doing finances or for retail cashiers or whatever. But it’s not a generalized computing system that can compute basically anything it’s given instructions for, which is what we think of when we hear something is a “computer”. It can only do basic math. It could never be used to display a photo, much less run a complex video game.

Similarly the current thing that’s called “AI”, can learn in a very narrow subject that it is designed for. It can’t learn just anything. It can’t make inferences beyond the training material or understand. It can’t create anything totally new, it just remixes things. It could never actually create a new genre of games with some kind of new interface that has never been thought of, or discover the exact mechanisms of how gravity works, since those things aren’t in its training material since they don’t yet exist.

Some calculators can run DooM, though

Lol, those are different. I meant like a little solar powered addition, subtraction, multiplication, division and that’s it kind of calculator.

Many areas of machine learning, particularly LLMs, are making impressive progress but the usual Y Combinator techbro types are overhyping things again. Same as every other bubble including the original Internet one and the crypto scams and half the bullshit companies they run that add fuck all value to the world.

The cult of bullshit around AI is a means to fleece investors. Seen the same bullshit too many times. Machine learning is going to have a huge impact on the world, same as the Internet did, but it isn’t going to happen overnight. The only certain thing that will happen in the short term is that wealth will be transferred from our pockets to theirs. Fuck them all.

I skip most AI/ChatGPT spam in social media with the same ruthlessness I skipped NFTs. It isn’t that ML doesn’t have huge potential but most publicity about it is clearly aimed at pumping up the market rather than being truly informative about the technology.

I think it will be the next big thing in tech (or “disruptor” if you must buzzword). But I agree it’s being way over-hyped for where it is right now.

Clueless executives barely know what it is, they just know they want to get ahead of it in order to remain competitive. Marketing types reporting to those executives oversell it (because that’s their job).

One of my friends is an overpaid consultant for a huge corporation, and he says they are trying to force-retro-fit AI to things that barely make any sense…just so that they can say that it’s “powered by AI”.

On the other hand, AI is much better at some tasks than humans. That AI skill set is going to grow over time. And the accumulation of those skills will accelerate. I think we’ve all been distracted, entertained, and a little bit frightened by chat-focused and image-focused AIs. However, AI as a concept is broader and deeper than just chat and images. It’s going to do remarkable stuff in medicine, engineering, and design.

Personally, I think medicine will be the most impacted by AI. Medicine has already been increasingly implementing AI in many areas, and as the tech continues to mature, I am optimistic it will have tremendous effect. Already there are many studies confirming AI’s ability to outperform leading experts in early cancer and disease diagnoses. Just think what kind of impact that could have in developing countries once the tech is affordably scalable. Then you factor in how it can greatly speed up treatment research and it’s pretty exciting.

That being said, it’s always wise to remain cautiously skeptical.

I have a doctorate in computer engineering, and yeah it’s overhyped to the moon.

I’m oversimplifying it and someone will ackchyually me but once you understand the core mechanics the magic is somewhat diminished. It’s linear algebra and matrices all the way down.

We got really good at parallelizing matrix operations and storing large matrices and the end result is essentially “AI”.

Big emphasis on the ‘A’
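To make the "linear algebra all the way down" point concrete, here is a rough sketch of a toy two-layer network's forward pass in plain Python. Every name, weight, and size below is made up for illustration, and real models add many more layers, attention, and learned weights, so treat this as a cartoon of the idea rather than how any particular model is built.

```python
# Toy forward pass: matrix-vector products plus a simple nonlinearity.
# All weights, biases, and layer sizes here are hypothetical.

def matvec(matrix, vector):
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

def relu(vector):
    """Clamp negative values to zero, element by element."""
    return [max(0.0, v) for v in vector]

def forward(x, w1, b1, w2, b2):
    """Two layers: (matrix multiply + bias + ReLU), then (matrix multiply + bias)."""
    hidden = relu([h + b for h, b in zip(matvec(w1, x), b1)])
    return [o + b for o, b in zip(matvec(w2, hidden), b2)]

W1 = [[1.0, -1.0], [0.5, 0.5]]  # 2 inputs -> 2 hidden units
B1 = [0.0, 0.0]
W2 = [[1.0, 2.0]]               # 2 hidden units -> 1 output
B2 = [0.5]

output = forward([2.0, 1.0], W1, B1, W2, B2)
print(output)  # [4.5]
```

Scale the matrices up by many orders of magnitude and run the multiplications in parallel on a GPU, and that is roughly the "parallelizing matrix operations" part of the comment above.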

I remember when it first came out I asked it to help me write a MapperConfig custom strategy and the answer it gave me was so fantastically wrong - even with prompting - that I lost an afternoon. Honestly the only useful thing I’ve found for it is getting it to find potential syntax errors in terraform code that the plan might miss. It doesn’t even complement my programming skills like a traditional search engine can do; instead it assumes a solution that is usually wrong and you are left to try to build your house on the boilercode sand it spits out at you.

It’s a general problem with ChatGPT (free): the more obscure the topic, the more useless the answers will be. It works pretty well for Wikipedia-style general knowledge, but everything that goes even a little deeper is a mess. This is true even when it comes to things that shouldn’t be that obscure, e.g. pop-culture things like movies. It can give you a summary of Star Wars, but anything even a little more outside the mainstream it makes up on the spot.

How much better is ChatGPT-Pro when it comes to this? Can it answer /r/tipofmytongue/ style questions?

I’ve found the free one can sometimes answer tip of my tongue questions but yeah anything even remotely obscure it will just lie and say that doesn’t exist, especially if you stray a little too close to the puritanical guard rails. One time I was going down a rabbit hole researching human sex organ variations and it flat out told me the people in South America who grow a penis at 12 don’t exist until I found the name guevedoces on my own, and wouldn’t you know it then it knew what I was talking about.

Have you used copilot? I find it to be fantastically useful.

I also have tried to use it to help with programming problems, and it is confidently incorrect a high percentage (50%) of the time. It will fabricate package names, functions, and more. When you ask it to correct itself, it will give another confidently incorrect answer. Do this a few more times and you could end up with it suggesting the first incorrect answer it gave you and then you realize it is literally leading you in circles.

It’s definitely a nice option to check something quickly, and it has given me some good information, but you really can’t blindly trust its output.

At least with programming, you can validate fairly quickly that it is giving bad information. With other real-life applications, using it for cooking/baking, or trip planning, the consequences of bad information could be quite a bit worse.
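On the "validate fairly quickly" point, one cheap sanity check for fabricated package names is to ask Python whether a suggested module can be found at all before building on it. This is only a sketch: the helper names are made up, and it only looks at what is installed locally, so a real package you haven't installed will also show up as missing.

```python
import importlib.util

def module_exists(name):
    """Return True if `name` can be found by the import system in this environment."""
    try:
        return importlib.util.find_spec(name) is not None
    except (ImportError, ValueError):
        # A dotted name whose parent package is missing raises instead of returning None.
        return False

def check_suggestions(names):
    """Map each suggested module name to whether it can be found locally."""
    return {name: module_exists(name) for name in names}

# "totally_made_up_pkg_12345" stands in for a name a chatbot might invent.
report = check_suggestions(["json", "totally_made_up_pkg_12345"])
for name, found in report.items():
    print(f"{name}: {'found' if found else 'NOT found'}")
```

It won't tell you whether the functions it suggested exist or do what it claims, but it catches the most blatant inventions in a few seconds.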

That’s because it is overrated and the people in the tech industry are actually qualified to make that determination. It’s a glorified assistant, nothing more. We’ve had these for years, they’re just getting a little bit better. It’s not gonna replace a network stack admin or a programmer anytime soon.

Reality: most tech workers view it as fairly rated or slightly overrated according to the real data: https://www.techspot.com/images2/news/bigimage/2023/11/2023-11-20-image-3.png

Which is fair. AI at work is great but it only does fairly simple things. Nothing I can’t do myself, but it saves my sanity and time.

It’s all I want from it and it delivers.

Slightly overrated is where I would put it, absolutely. It’s overhyped, but god if the recent advancements aren’t impressive.

I work in AI, and I think AI is overrated.

It is currently overhyped and so much of it just seems to be copying the same 3 generative AI tools into as many places as possible. This won’t work out because it is expensive to run the AI models. I can’t believe nobody talks about this cost.

Where AI shines is when something new is done with it, or there is a significant improvement in some way to an existing model (more powerful or runs on lower end chips, for example).

In a podcast I listen to where tech people discuss security topics they finally got to something related to AI, hesitated, snickered, said “Artificial Intelligence I guess is what I have to say now instead of Machine Learning” then both the host and the guest started just belting out laughs for a while before continuing.

I’ll join in on the cacophony in this thread and say it truly is way overrated right now. Is it cool and useful? Sure. Is it going to replace all of our jobs and do all of our thinking for us from now on? Not even close.

I, as a casual user, have already noticed some significant problems with the way that it operates such that I wouldn’t blindly trust any output that I get without some serious scrutiny. AI is mainly being pushed by upper management-types who don’t understand what it is or how it works, but they hear that it can generate stuff in a fraction of the time a person can and they start to see dollar signs.

It’s a fun toy, but it isn’t going to change the world overnight.